Meta Is Back: Inside Muse Spark and the $14B Alexandr Wang Bet

Highlights of AI News for April 6–12, 2026

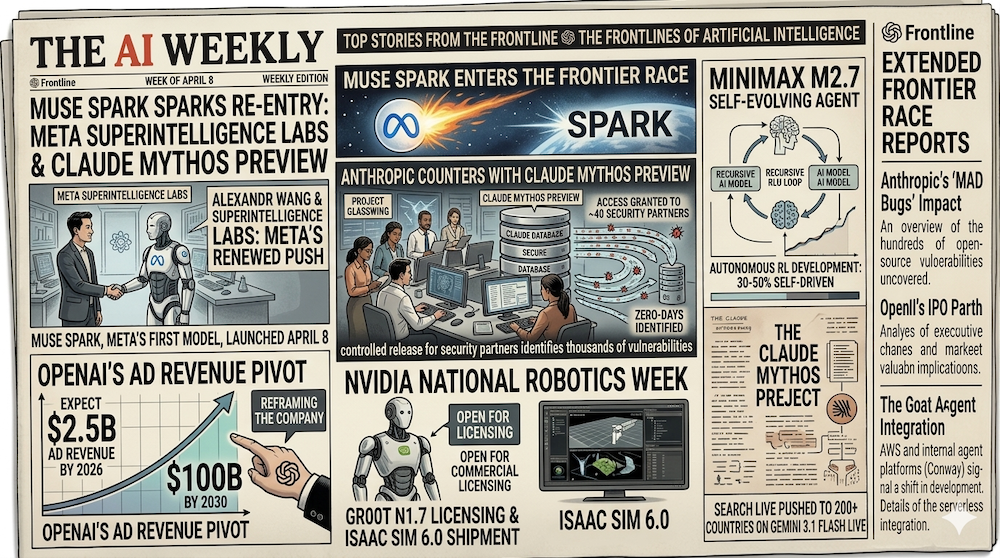

Week in Review | Meta re-entered the frontier race on April 8 with Muse Spark, the first model out of its new Superintelligence Labs under Alexandr Wang. Anthropic countered the same week with a controlled release of Claude Mythos Preview under Project Glasswing, granting ~40 security partners early access to a model that has already identified thousands of zero-days. OpenAI told investors to expect $2.5B in ad revenue in 2026 and $100B by 2030, reframing what the company is. MiniMax released M2.7, a self-evolving agent model that autonomously drove 30–50% of its own RL development, under an open-weight (not open-source) license. NVIDIA's National Robotics Week opened GR00T N1.7 for commercial licensing and shipped Isaac Sim 6.0. Tufts researchers showed neuro-symbolic AI cutting robot energy use by up to 100×. HumanX 2026 drew enterprise CIOs to Moscone South, and state legislatures kept grinding out chatbot and healthcare AI laws.

Watch our video on YouTube or Rumble.

The Big Story: Meta Re-Enters the Frontier Race with Muse Spark

On April 8, Meta unveiled Muse Spark, the first model to ship out of its newly formed Meta Superintelligence Labs and the company's first meaningful frontier release since the Llama line started to fall behind. Code-named Avocado internally, the model was built over nine months by a team led by Alexandr Wang, the former Scale AI CEO whom Meta brought in through a $14B deal last year specifically to rebuild the company's AI leadership.

Muse Spark is natively multimodal and accepts voice, text, and image inputs. It supports tool use, visual chain-of-thought, and — notably — multi-agent orchestration: Meta AI can now spawn parallel subagents to tackle different facets of a query simultaneously. On the Artificial Analysis Intelligence Index, Muse Spark scores 52, placing it fourth behind only Gemini 3.1 Pro, GPT-5.4, and Claude Opus 4.6 — and well ahead of every previous Llama-branded model. The model is powering queries in the Meta AI app and meta.ai website immediately, with staged rollout across Facebook, Instagram, and WhatsApp to follow.

The reframe is what matters. Meta spent 2024–2025 looking like it had permanently ceded the frontier to OpenAI, Anthropic, and Google. Muse Spark is the first concrete evidence that the Superintelligence Labs reorg is producing research, not just org charts. If the trajectory holds, the Big Four becomes a Big Five again, and every competitive calculation — pricing, talent acquisition, distribution — shifts.

Why it matters: Muse Spark validates the bet that deep pockets plus a high-leverage hire can restart a stalled research org in under a year. That's a template other incumbents with AI ambitions — Apple, Amazon, Oracle, Tencent — will study closely. The frontier is not as structurally closed as it looked six months ago.

Anthropic's Project Glasswing: Turning the Most Dangerous Model into a Defensive Tool

On April 7, Anthropic did something unusual: it announced a model it is deliberately not releasing broadly. Claude Mythos Preview, the successor to Opus 4.6 that Anthropic has been developing quietly since January, is strikingly capable at computer security tasks — in internal testing, it identified and exploited vulnerabilities in over 80% of cases, and has already catalogued thousands of zero-day flaws across every major operating system and browser.

Rather than ship it to the API, Anthropic launched Project Glasswing, a controlled-access program giving the model to roughly 40 critical infrastructure partners — including AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, Microsoft, and NVIDIA — along with select open-source maintainers. The explicit goal: let defenders patch critical systems before comparable capabilities become widely available. Mythos Preview has already discovered a 17-year-old remote code execution vulnerability in FreeBSD's NFS implementation, tracked as CVE-2026-4747, that grants root on any vulnerable machine.

The move is a sharp course-correction from Anthropic's recent operational stumbles — March's Mythos code-name leak and the subsequent exposure of ~500K lines of Claude Code source. Rather than ship defensively, Anthropic is shipping selectively. Simon Willison called it "the most responsible thing a frontier lab has done in a while," and the industry response has been notably positive.

Why it matters: Glasswing is the first real test of the "defense-first release" playbook for capabilities that could enable mass cyberattacks. If it works, meaning partner organizations actually ship patches faster than adversaries can replicate the capability, it becomes a template for future dual-use releases. If it fails, we'll learn that quickly too.

OpenAI Becomes an Ad Company (Eventually)

Reports surfaced on April 9 that OpenAI has told investors to expect $2.5B in advertising revenue in 2026, scaling to $11B in 2027, $25B in 2028, $53B in 2029, and $100B by 2030. The projection assumes OpenAI's products reach 2.75B weekly users by decade's end. The ChatGPT ad pilot that launched in January reached a $100M annualized revenue run rate within its first six weeks, with more than 600 advertisers.
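To put the trajectory in perspective, here is a rough back-of-the-envelope sketch of what the reported figures imply. It uses only the numbers quoted above. The function names are ours, and the per-user figure assumes, as a simplification, that the 2.75B weekly-user target is reached in 2030 and that every weekly user counts equally.

```python
# Reported ad-revenue projections from the paragraph above, in billions of USD.
projections = {2026: 2.5, 2027: 11.0, 2028: 25.0, 2029: 53.0, 2030: 100.0}
WEEKLY_USERS_2030 = 2.75e9  # projected weekly users by decade's end (assumed to mean 2030)


def yoy_multiples(series):
    """Return {year: revenue / previous year's revenue} for each consecutive pair."""
    years = sorted(series)
    return {cur: series[cur] / series[prev] for prev, cur in zip(years, years[1:])}


def cagr(series):
    """Compound annual growth rate between the first and last year in the series."""
    years = sorted(series)
    periods = years[-1] - years[0]
    return (series[years[-1]] / series[years[0]]) ** (1 / periods) - 1


multiples = yoy_multiples(projections)
growth = cagr(projections)
revenue_per_user = projections[2030] * 1e9 / WEEKLY_USERS_2030

for year, multiple in multiples.items():
    print(f"{year}: {multiple:.2f}x the prior year")
print(f"Implied CAGR 2026-2030: {growth:.1%}")
print(f"Implied 2030 ad revenue per weekly user: ${revenue_per_user:.2f}")
```

On these figures the year-over-year multiple falls from 4.4× in 2027 to about 1.9× in 2030, the four-year compound growth rate works out to roughly 150% per year, and the 2030 target implies about $36 of ad revenue per weekly user per year.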

The numbers matter less than the framing. OpenAI is a subscription-and-API business today; by 2030, on this trajectory, it would be a platform-and-ads business with subscriptions as the secondary line. That's a fundamentally different company — one whose incentives, data practices, and product surface area diverge sharply from Anthropic's ad-free posture. It also lays the groundwork for the potential IPO the company has floated for late 2026: investors want multiple scaling revenue lines, not just enterprise subscriptions.

Why it matters: Once OpenAI depends on advertising, the product decisions follow: what gets shown, what gets ranked, what data leaves the session. We've seen this script before with search and social. The AI-assistant category is about to inherit it.

MiniMax M2.7 Goes Open-Weight and Self-Evolving

On April 12, MiniMax released M2.7, an MoE agent model that pairs competitive benchmarks with a credible claim to self-improvement. On SWE-Pro, M2.7 scores 56.22%, matching GPT-5.3-Codex. On Terminal Bench 2 it hits 57.0%, and NL2Repo clocks in at 39.8%. These are frontier-adjacent numbers for a model you can download and run.

The headline claim is self-evolution. Over 100+ autonomous iterative rounds, M2.7 drove 30–50% of its own reinforcement-learning development workflow — reading logs, debugging, analyzing failure trajectories, modifying scaffold code, running evaluations, and deciding whether to keep or revert changes. This is the first major publicly-available agent release that meaningfully operationalizes the self-improvement loops the field has been theorizing about for two years.
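The keep-or-revert step at the end of that loop is the easiest part to picture in code. The sketch below is a toy illustration of the general pattern only, not MiniMax's pipeline: `evaluate`, `propose_change`, and the two scaffold settings are hypothetical stand-ins we made up, and the "agent edit" is just a seeded random nudge.

```python
import random


def evaluate(scaffold):
    """Toy stand-in for an evaluation suite: higher is better, best when every value is 1.0."""
    return -sum((value - 1.0) ** 2 for value in scaffold.values())


def propose_change(scaffold, rng):
    """Toy stand-in for an agent edit: copy the scaffold and nudge one setting."""
    candidate = dict(scaffold)
    key = rng.choice(sorted(candidate))
    candidate[key] += rng.uniform(-0.5, 0.5)
    return candidate


def self_improvement_loop(scaffold, rounds, seed=0):
    """Run keep-or-revert rounds; return the final scaffold and a decision log."""
    rng = random.Random(seed)
    best_score = evaluate(scaffold)
    log = []
    for round_index in range(rounds):
        candidate = propose_change(scaffold, rng)
        score = evaluate(candidate)
        if score > best_score:
            # Keep: the change improved the evaluation score.
            scaffold, best_score = candidate, score
            log.append((round_index, "keep", best_score))
        else:
            # Revert: discard the candidate and stay on the previous scaffold.
            log.append((round_index, "revert", best_score))
    return scaffold, log


# Hypothetical settings, chosen only so the toy score has room to improve.
start = {"retry_limit": 0.0, "context_budget": 3.0}
final, history = self_improvement_loop(start, rounds=100)
kept = sum(1 for _, decision, _ in history if decision == "keep")
print(f"start score {evaluate(start):.3f}, final score {evaluate(final):.3f}, kept {kept}/100")
```

Because a change is only kept when the score improves, the logged score can never go down. A real system also has to worry about whether the evaluation itself is trustworthy, which this toy ignores.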

A licensing clarification worth flagging. Initial coverage, including MiniMax's own launch posts, described M2.7 as "open-source." That framing was corrected within days, both by a community note on X and by MiniMax's own clarification, once readers of the license text pointed out that commercial use requires prior written authorization from MiniMax, with mandatory "Built with MiniMax M2.7" attribution. Non-commercial use (research, personal projects, private fine-tunes) remains permissive. MiniMax's head of developer relations attributed the terms to hosting providers shipping degraded versions under the MiniMax name on previous releases. The accurate label, reflected in Hugging Face discussions and legal analysis on X, is open-weight, not open-source.

Why it matters: If independent replications confirm the self-evolution claims, the compute-to-capability curve for open-weight models just got a lot steeper. Frontier labs were the only ones with the headcount to run these loops at scale. They may no longer be — though the commercial-use restriction means serious enterprise adopters will still need to negotiate terms.

NVIDIA's National Robotics Week: GR00T Goes Commercial

Timed to National Robotics Week (April 5–12), NVIDIA opened commercial licensing for GR00T N1.7 — the first production-ready tier of its humanoid-robot foundation model family — and announced GR00T N2, the next-generation foundation model built on DreamZero research that more than doubles task-success rates versus leading vision-language-action baselines. The company also shipped Isaac Sim 6.0, Isaac Lab 3.0, and the 1.0 release of its open-source Newton physics engine, which provides fast collision detection and contact simulation for dexterous manipulation.

Adoption signals are real: ABB, FANUC, and KUKA — three of the largest industrial robot makers in the world — have publicly committed to the stack. The Cosmos world-model family, which generates synthetic training data, rounds out the release. Together these are the most coherent "full-stack physical AI" offering any vendor has shipped, and they arrived the same week our Spatial Architectures tutorial argued that geometric inductive biases are about to become non-optional for robotics.

Why it matters: The robotics AI stack is consolidating faster than the LLM stack did. If GR00T becomes the default foundation model for humanoid platforms in 2026 the way CUDA became the default for training in 2015, NVIDIA locks in another decade-long moat — this time in physical AI.

Research Corner: Neuro-Symbolic AI Cuts Robot Energy Use by 100×

The most interesting research story of the week came out of Matthias Scheutz's lab at Tufts. In work published April 5 and widely covered through the week, the team showed that a neuro-symbolic vision-language-action architecture, which combines a neural perception front-end with explicit symbolic reasoning over tasks, reached a 95% success rate on Tower of Hanoi, against just 34% for a comparable purely neural baseline. Training time collapsed from 36+ hours to 34 minutes, and the neuro-symbolic model used 1% of the baseline's energy during training (the 100× in the headline) and 5% during inference (a 20× reduction). The work is headed to ICRA in Vienna in May.
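Tower of Hanoi is a useful benchmark here because its rules are fully symbolic: once the state is known, a few lines of explicit reasoning produce an optimal plan. The sketch below is a textbook recursive planner plus a rule checker. It is not the Tufts architecture; it only illustrates the kind of structure a symbolic back-end can exploit and a purely neural model has to learn from data. The function and peg names are our own.

```python
def solve_hanoi(n, source="A", spare="B", target="C"):
    """Return the optimal plan for n disks as (disk, from_peg, to_peg) tuples."""
    if n == 0:
        return []
    return (
        solve_hanoi(n - 1, source, target, spare)    # clear the n-1 smaller disks onto the spare peg
        + [(n, source, target)]                       # move the largest disk to the target
        + solve_hanoi(n - 1, spare, source, target)  # restack the smaller disks on top of it
    )


def is_valid_plan(n, moves):
    """Replay the moves under the puzzle rules; True only if all n disks end on peg C."""
    pegs = {"A": list(range(n, 0, -1)), "B": [], "C": []}
    for disk, src, dst in moves:
        if not pegs[src] or pegs[src][-1] != disk:
            return False  # the named disk is not on top of the source peg
        if pegs[dst] and pegs[dst][-1] < disk:
            return False  # a larger disk may not sit on a smaller one
        pegs[dst].append(pegs[src].pop())
    return pegs["C"] == list(range(n, 0, -1))


plan = solve_hanoi(3)
print(len(plan), "moves, valid:", is_valid_plan(3, plan))  # 7 moves, valid: True
```

The plan length is always 2^n − 1, so the planner needs no training at all; the hard part for a robot is perceiving the state and executing each move, which is where the neural front-end comes in.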

This lands squarely in our W15 theme. The trend tutorial we published this week, Beyond Attention — Post-Transformer Architectures for Physical AI, argued that the Transformer is not the final answer for embodied reasoning and that the field is actively diversifying: SSMs, equivariant networks, capsules. Neuro-symbolic architectures are another strand of that same story — structured priors beat brute-force scale when the domain has exploitable structure. Our Spatial Architectures tutorial and its companion notebook make the same argument empirically: invariant features generalize across rotations with 0% accuracy drop, while raw-coordinate MLPs lose 88.7%. The academic and engineering communities are converging on the same diagnosis from different angles.
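The mechanism behind that rotation result is easy to see on a toy point cloud. The sketch below does not reproduce the notebook or its accuracy numbers; it simply rotates four hand-picked 3D points about the z-axis and compares how much the raw coordinates move against how much a rotation-invariant feature (sorted pairwise distances) moves. The points and helper names are our own.

```python
import math
from itertools import combinations


def rotate_z(points, angle):
    """Rotate 3D points about the z-axis by `angle` radians."""
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    return [(cos_a * x - sin_a * y, sin_a * x + cos_a * y, z) for x, y, z in points]


def pairwise_distances(points):
    """Rotation-invariant feature vector: all pairwise distances, sorted."""
    return sorted(math.dist(p, q) for p, q in combinations(points, 2))


def flatten(points):
    """Raw-coordinate feature vector: x, y, z of every point in order."""
    return [coord for point in points for coord in point]


def max_abs_diff(a, b):
    """Largest element-wise absolute difference between two equal-length vectors."""
    return max(abs(x - y) for x, y in zip(a, b))


cloud = [(1.0, 0.0, 0.0), (0.0, 2.0, 0.0), (0.0, 0.0, 3.0), (1.0, 1.0, 1.0)]
rotated = rotate_z(cloud, math.radians(90))

raw_shift = max_abs_diff(flatten(cloud), flatten(rotated))
invariant_shift = max_abs_diff(pairwise_distances(cloud), pairwise_distances(rotated))

print(f"largest change in raw coordinates:    {raw_shift:.3f}")
print(f"largest change in pairwise distances: {invariant_shift:.2e}")
```

A model fed raw coordinates sees a very different input after the rotation and has to learn that the two inputs mean the same thing. A model fed the invariant features sees the same input up to floating-point error, so there is nothing to relearn.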

Also shipped this week: Vision Transformers Explained, a beginner-friendly walkthrough of ViT's patch-embedding design, and the SSM vs. Attention notebook that builds both architectures from scratch in pure NumPy.

Why it matters: The "more compute, more data" playbook is starting to break on energy economics. If neuro-symbolic results hold at scale, the 2027 architecture debate is not Transformer vs. Mamba — it's whether monolithic networks are the right unit at all.

HumanX 2026: Enterprise AI Confronts the ROI Question

HumanX 2026 ran April 6–9 at Moscone South in San Francisco. The speaker list was deep — Matt Garman (AWS), Fei-Fei Li (World Labs), Ali Ghodsi (Databricks), Katrin Lehmann (Mercedes-Benz), Al Gore on climate and AI — but the through-line of the conference was harder-edged than last year's. Q1 2026 was the first quarter where most enterprise AI deployments had been running long enough to produce honest ROI numbers, and the panels reflected that: cost management, model selection, fine-tuning versus foundation models, vendor lock-in, and how to avoid the $100K pilot that never ships.

World models and embodied AI — still research-lab topics a year ago — featured prominently in enterprise-track sessions, a signal that the market is ready to move past language-only deployments.

State AI Regulation Keeps Grinding Forward

While federal AI policy under the second Trump administration continues to emphasize innovation over guardrails, the states are not waiting. This week alone, chatbot-disclosure bills were signed into law in Oregon and Idaho, and Tennessee enacted a targeted healthcare-AI bill. That follows Texas's TRAIGA (effective January 1) and Colorado's broader AI Act (effective June 30). The resulting patchwork is becoming a real compliance burden for national deployers, one that, per several HumanX panels, is now a line item in enterprise AI procurement.

Why it matters: Companies shipping consumer-facing AI products in the U.S. now need state-by-state compliance matrices, not a single federal posture. That's a cost and a slowing force on national rollouts — which the EU AI Act's full applicability on August 2, 2026 will amplify internationally.

By the Numbers

- 52 — Muse Spark's score on the Artificial Analysis Intelligence Index, placing it fourth behind Gemini 3.1 Pro, GPT-5.4, and Claude Opus 4.6.

- ~40 — Organizations granted early access to Claude Mythos Preview under Project Glasswing.

- 80%+ — Internal success rate at which Mythos Preview identifies and exploits known software vulnerabilities.

- 17 years — Age of the FreeBSD NFS remote code execution vulnerability (CVE-2026-4747) that Mythos Preview found autonomously.

- $2.5B — OpenAI's projected 2026 advertising revenue.

- $100B — OpenAI's projected 2030 advertising revenue.

- $100M — Annualized revenue from the six-week-old ChatGPT ad pilot, across 600+ advertisers.

- 56.22% — MiniMax M2.7 score on SWE-Pro, matching GPT-5.3-Codex.

- 30–50% — Share of its own RL development workflow that M2.7 drove autonomously over 100+ iterative rounds.

- 100× — Energy reduction during training for the Tufts neuro-symbolic VLA versus pure neural baselines.

- 95% vs 34% — Tower of Hanoi success rate for neuro-symbolic vs. pure-neural reasoning systems in the Tufts paper.

- 34 min vs 36+ hr — Training time for the neuro-symbolic model vs. the neural baseline.

- 2×+ — Task-success improvement of NVIDIA GR00T N2 over leading vision-language-action baselines.

- 3 — Major industrial robot makers (ABB, FANUC, KUKA) publicly committing to NVIDIA's robotics stack.

What to Watch Next Week

- DeepSeek V4 public launch. Reuters and multiple industry sources point to a late-April drop: 1T total parameters, ~32–37B active per token, running on Huawei Ascend chips. If it ships within next week's window, it will be next week's Big Story.

- Muse Spark rollout across Meta surfaces. Watch for availability dates across Instagram, WhatsApp, Ray-Ban Meta glasses, and the Threads app. The deployment pace tells us how production-ready the model actually is.

- Project Glasswing first disclosures. Expect the first patch announcements from Mythos-assisted partners — AWS, Microsoft, and CrowdStrike are the most likely to go first. The quality of the CVE flow will determine how this narrative is read.

- ChatGPT ad-rollout expansion. OpenAI will need to show the ad pilot can scale beyond free-tier U.S. users without tanking retention.

- EU AI Act readiness. With full applicability landing August 2, expect more implementation guidance and a wave of enterprise compliance announcements.

- CVPR 2026 runup. The 3D-LLM/VLA Workshop paper deadline is April 26, and submissions should start surfacing as preprints.

All References

- Introducing Muse Spark: Scaling Towards Personal Superintelligence — Meta AI (April 8, 2026)

- Introducing Muse Spark: Meta's Most Powerful Model Yet — Meta Newsroom (April 8, 2026)

- Meta debuts the Muse Spark model in a 'ground-up overhaul' of its AI — TechCrunch (April 8, 2026)

- Meta debuts Muse Spark, first AI model under Alexandr Wang — Axios (April 8, 2026)

- Meta debuts new AI model, attempting to catch Google, OpenAI after spending billions — CNBC (April 8, 2026)

- Muse Spark: Meta is back in the AI race — Artificial Analysis (April 8, 2026)

- Meta's new model is Muse Spark — Simon Willison (April 8, 2026)

- Claude Mythos Preview — Anthropic (April 7, 2026)

- Project Glasswing: Securing critical software for the AI era — Anthropic (April 7, 2026)

- Anthropic debuts preview of powerful new AI model Mythos in new cybersecurity initiative — TechCrunch (April 7, 2026)

- Anthropic is giving some firms early access to Claude Mythos to bolster cybersecurity defenses — Fortune (April 7, 2026)

- Anthropic's Claude Mythos Finds Thousands of Zero-Day Flaws Across Major Systems — The Hacker News (April 7, 2026)

- Anthropic's Project Glasswing—restricting Claude Mythos to security researchers—sounds necessary to me — Simon Willison (April 7, 2026)

- OpenAI projects $100 billion in ad revenue by 2030 — Axios (April 9, 2026)

- OpenAI Projects $100 Billion In Ad Revenue By 2030 — MediaPost (April 10, 2026)

- OpenAI Reportedly Eyes $100 Billion Ad Empire By 2030 — Benzinga (April 10, 2026)

- MiniMax Just Open Sourced MiniMax M2.7 — MarkTechPost (April 12, 2026)

- MiniMax M2.7: Early Echoes of Self-Evolution — MiniMax News (April 12, 2026)

- New MiniMax M2.7 proprietary AI model is 'self-evolving' — VentureBeat (April 2026)

- MiniMax M2.7 License (open-weight, non-commercial) — Hugging Face

- MiniMax Drops State-of-the-Art AI Agent Model—Then Quietly Changes the License — Decrypt (April 2026)

- National Robotics Week — Latest Physical AI Research, Breakthroughs and Resources — NVIDIA Blog (April 2026)

- AI breakthrough cuts energy use by 100x while boosting accuracy — ScienceDaily (April 5, 2026)

- HumanX 2026 — HumanX Conference (April 6–9, 2026)

- Proposed State AI Law Update: April 6, 2026 — JD Supra / Troutman Pepper Locke (April 6, 2026)

- EU AI Act — European Commission

- DeepSeek V4 Expected to Launch in Late April with Massive Parameter Scale — GizChina (April 10, 2026)

- 3D-LLM/VLA Workshop at CVPR 2026 — CVPR 2026 Workshop

- Beyond Attention — Post-Transformer Architectures for Physical AI — Artifocial (April 9, 2026)

- Vision Transformers Explained: From Patches to Embeddings — Artifocial (April 10, 2026)

- Spatial Architectures: From Capsule Networks to Equivariant Neural Networks — Artifocial (April 12, 2026)

- Notebook 00: SSM vs. Attention From Scratch — Artifocial Tutorials (2026-W15)

- Notebook 01: Equivariant vs. Standard Features for 3D — Artifocial Tutorials (2026-W15)
