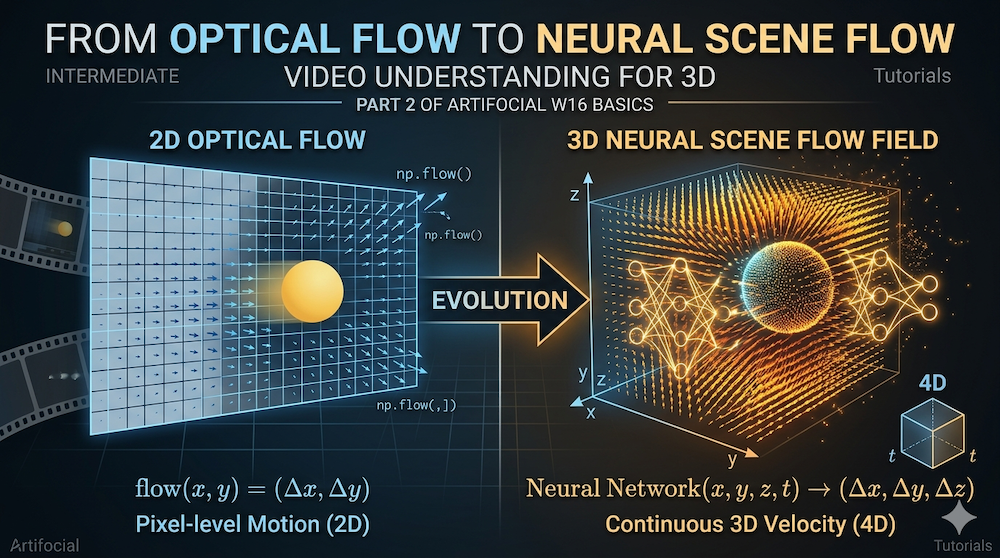

From Optical Flow to Neural Scene Flow: Video Understanding for 3D

How motion estimation evolved from pixel-level optical flow to neural scene flow fields — and why it matters for reconstructing dynamic 3D worlds from video.

Level: Intermediate | Part 2 of Artifocial W16 Basics | Research area: Dynamic 3D reconstruction

Companion Notebook

This article pairs with 01_video_to_3d_toy.ipynb, where we build a pure-NumPy video-to-3D pipeline from scratch. You'll generate synthetic moving objects, estimate scene flow, and reconstruct dynamic point clouds—all CPU-only, zero deep learning.

1. Motion Is the Missing Dimension

A photograph is a 2D slice of a 3D world frozen at one instant. With multiple photographs of the same scene from different angles—stereo pairs or multi-view sequences—you can recover 3D structure via triangulation. But what's missing? Motion.

Video is photographs over time. If you have a series of images of a dynamic scene, you have not just geometry but also the dynamics—how things move in 3D space. This is the key insight: video is a 4D signal (3 spatial + 1 temporal), and extracting that structure is the bridge from static 3D reconstruction (like classic 3D Gaussian Splatting) to dynamic 4D understanding.

The challenge is that we observe only 2D projections: pixels on a screen. We must infer both what the 3D scene is and how it moves from pixel data alone. This article traces the evolution of that problem: from classical optical flow, which captures apparent motion in 2D, through scene flow, which captures true 3D motion, to neural scene flow fields, which learn continuous 3D motion from video.

By the end, you'll understand why 4DGS deformation fields are essentially learned scene flow—and why this matters for rendering dynamic worlds.

2. Optical Flow: Motion in 2D

Optical flow is the per-pixel 2D displacement field between consecutive frames. For each pixel at position in frame , optical flow estimates how far that pixel moved to in frame :

This is a dense motion field—every pixel gets a 2D motion vector.

The foundation of optical flow rests on the brightness constancy assumption: pixels that belong to the same physical point don't change intensity as they move. In other words:

where is image intensity. This is intuitive but wrong in practice (occlusions, reflections, lighting changes), yet it remains a powerful prior.

Classical Methods

In 1981, Horn and Schunck introduced a global smoothness constraint: neighboring pixels should have similar flow. They minimize:

where is the flow field and controls smoothness. This generates dense, smooth flow but struggles at motion boundaries.

Lucas and Kanade (also 1981) took a different approach: compute flow locally within small windows, assuming constant motion within each patch. This yields sparse but robust flow at feature points.

Deep Learning Era

Deep neural networks revolutionized optical flow. FlowNet (Fischer et al., 2015) was the first end-to-end CNN for dense flow estimation. PWC-Net (Sun et al., 2018) introduced pyramidal feature extraction and warping. But the watershed moment came with RAFT (Teed & Deng, 2020), which builds correlation volumes—dense comparisons between feature maps across frames—then refines flow iteratively using a recurrent cell. RAFT remains state-of-the-art for optical flow accuracy on standard benchmarks.

The 2D Limitation

Optical flow solves a real problem: it gives you dense, accurate per-pixel motion. But here's the catch: optical flow is 2D motion in the image plane. It tells you the apparent motion of pixels, not the true 3D motion of points in the world.

Consider a point at depth moving radially toward the camera. It has real 3D velocity, but optical flow near image boundaries may be huge (the point rushes across the image plane) while near the image center it's small. A point moving parallel to the image plane contributes observable 2D flow; a point moving along the camera's line of sight contributes no optical flow at all—it only changes size or intensity.

In other words: optical flow is 2D projection. To recover 3D motion, we need 3D structure—depth.

3. From 2D Flow to 3D: Scene Flow

Scene flow generalizes optical flow to 3D. Instead of a per-pixel 2D displacement, scene flow is a per-point 3D displacement field:

This was introduced by Vedula et al. in their 1999 paper "Three-dimensional scene flow". Scene flow answers: "How did this 3D point move between time and ?"

The mathematical relationship is simple: optical flow is the projection of scene flow onto the image plane. If you know the depth at every pixel, and you have optical flow, you can lift the flow to 3D scene flow:

where is focal length, is depth, and are optical flow components. Inverting this relationship:

gives you the 2D components of scene flow. The (depth change) comes from temporal depth differences.

When Depth Is Unknown

Without depth, scene flow from monocular video is ill-posed—infinitely many 3D motions project to the same 2D optical flow.

Picture a point at that in the next frame is at — it slid straight away from the camera along the viewing ray. Its 2D projection is unchanged, so optical flow is zero. Now picture it staying put — also zero flow. Same observation, infinitely many possible 3D motions. Every pixel corresponds to a ray from the camera center; the pixel records where a point projects to, not where on the ray the point is. Motion along the ray is invisible.

You need priors: smoothness, local rigidity, planarity. Multi-view sequences help; synchronized cameras observing the same moving scene can triangulate depth and resolve ambiguities.

But here's the modern insight: you can learn scene flow end-to-end from monocular video using self-supervision.

Optical Flow vs. Scene Flow at a Glance

| Feature | Optical Flow (2D) | Scene Flow (3D) |

|---|---|---|

| Domain | Image plane | World space |

| What it measures | Per-pixel 2D displacement | Per-point 3D displacement |

| Minimum input | Two 2D frames | Frames + depth (or multi-view) |

| Key blind spot | Motion along the view ray (radial) | Underdetermined from monocular alone (needs priors) |

4. Neural Scene Flow Fields

In 2021, Li et al. published Neural Scene Flow Fields, a paradigm shift. Instead of treating scene flow as a discrete grid, they represented it as a continuous neural field:

The network learns to map any 3D position and time to the 3D velocity at that point.

Training Signal

Crucially, they trained without ground-truth 3D flow. Instead, they used three self-supervision losses:

-

Photometric consistency: Warp frame to frame using the predicted scene flow. If the warp reconstructs the next frame, the flow is likely correct. In practice this requires a differentiable warper (bilinear spatial transformer, STN-style) so gradients flow from pixel residuals through the warp back to the 3D velocity field.

-

Depth consistency: The flow and depth must be consistent—where objects move in the world, depth maps change accordingly.

-

Cycle consistency: Forward scene flow plus backward scene flow should sum to zero (or nearly so).

These losses let the network learn scene flow from raw video without human annotation.

Why This Matters

Neural scene flow fields unlock novel view synthesis of dynamic scenes from a single video. You can render the scene at any viewpoint and any time point, even where you never filmed.

More broadly, the deformation field in 4D Gaussian Splatting is essentially a learned scene flow field. Modern 4DGS methods like 4DGS by Wu et al. and Deformable 3D Gaussians by Yang et al. represent Gaussian positions that move over time. The motion between frames is scene flow; the motion field is a learned deformation. The mechanics are slightly different (continuous fields vs. discrete optimization), but the concept is identical: learn how points move from video.

5. Depth Estimation from Video

To lift optical flow to scene flow, you need accurate depth. Modern video-based depth comes in three flavors:

Monocular depth estimation uses a single frame. Networks like MiDaS, DPT (Dense Prediction Transformers), and Depth Anything predict relative depth from appearance alone. These are powerful but inherently ambiguous—a photo of a small nearby object and a large distant object can be indistinguishable.

Multi-frame depth leverages temporal consistency. Frames in a video capture the same scene from slightly different viewpoints (due to camera motion). This temporal baseline acts like stereo: you can triangulate depth from correspondence. Methods like MegaDepth and self-supervised depth learning during NeRF optimization improve monocular depth estimates using temporal information.

Structure-from-Motion (SfM) is the classical approach. Extract local features (corners, descriptors) in each frame. Match features across frames to find correspondence. Triangulate 3D points from multiple views and camera poses simultaneously. The standard pipeline is COLMAP, a mature C++ library that produces camera poses (for each frame, where was the camera and which direction was it pointing?) and sparse 3D point clouds. COLMAP is so widely used that it's the canonical input pipeline for 3DGS and 4DGS. COLMAP is the classical workhorse; recent feed-forward methods (e.g., DUSt3R, MASt3R) replace the optimization loop with a single forward pass, trading some accuracy for drastic speedups.

The Pipeline Integration

Accurate camera poses are non-negotiable for dynamic reconstruction. If your camera trajectory is wrong, your deformation field must compensate, introducing artifacts. This is why methods like BARF (Lin et al., 2021) and later 4DGS systems often refine camera poses jointly with geometry.

6. The Dynamic Reconstruction Pipeline

Let's assemble everything into the full video-to-4D pipeline that modern papers actually implement:

Step 1: Camera Pose Estimation Run COLMAP (or a learned pose estimator like COLMAP-Free methods) on your video to extract camera intrinsics and extrinsics for each frame. Output: camera trajectory + sparse 3D points.

Step 2: Initialize Gaussians Use sparse SfM points to initialize 3D Gaussian positions, scales, and rotations. This gives you a reasonable static representation of the scene.

Step 3: Optimize Gaussians and Deformation For each frame, optimize:

- Gaussian parameters: position, covariance, color, opacity (standard 3DGS)

- Deformation field: how each Gaussian moves at this timestep

- Optionally, camera poses (joint refinement)

Step 4: Regularization Add temporal smoothness losses to prevent the deformation field from oscillating chaotically. Local rigidity constraints encourage nearby points to move together. These priors make the reconstruction physically plausible.

Step 5: Render and Evaluate For any (viewpoint, time) pair, render by splatting deformed Gaussians. Compare to ground truth frames (if available) or visually inspect the result.

This is the essence of 4DGS: Gaussians at rest + learned per-frame deformations = dynamic 3D rendering.

Monocular vs. Multi-View

A single video (monocular) can reconstruct the scene, but depth ambiguity persists. Multi-view input from synchronized cameras around a performer (like volumetric capture studios) gives ground-truth depth via triangulation and produces sharper reconstructions. But monocular 4DGS is sufficient for many applications; the neural field learns to resolve ambiguities.

7. Beyond Reconstruction: Prediction

Reconstruction answers: "What did the scene look like at time ?" Prediction asks: "What will it look like at time ?"

Scene flow extrapolation is the simplest version. If you estimate scene flow from frames , can you predict the flow at ? This works well for rigid motion (assuming constant velocity) but fails for articulated or fluid dynamics.

World models take a harder path. Networks like NVIDIA Cosmos or DreamZero learn the dynamics of the visual world: given several frames, predict the next frames by learning implicit physical laws. These models effectively learn a parameterized scene flow—or a flow-like deformation—that evolves over time. The output is new video, but the internals are 3D motion fields.

Video prediction in latent space, like V-JEPA 2 from Meta, learns to predict future embeddings rather than pixels. Pixel-space prediction models famously produce blurry outputs — with uncertainty over where something will be, averaging the candidates blurs the result. JEPA-style models sidestep this by predicting in a learned latent space, where modes are sparser. You can read V-JEPA 2's future-embedding predictions as an implicit scene-flow model, operating on representations rather than pixels.

The Convergence

There's a convergence happening:

- 4DGS = static scene + learned per-frame deformations

- Neural scene flow fields = continuous 3D velocity fields trained from video

- World models = learned visual dynamics (often implicit scene flow)

All three are variants of the same core idea: learn how the world moves. The distinction is in representation (discrete Gaussians vs. continuous fields) and supervision (reconstruction loss vs. next-frame prediction). But fundamentally, they're learning scene flow.

This connects back to our W13 world models survey and the W15 SSM trend article, which discuss how temporal models (SSMs, Transformers, diffusion models) can learn and predict dynamics. Scene flow is the geometric embodiment of that learned dynamics.

Look Ahead — Event Cameras

Event cameras (DVS-style sensors) produce asynchronous per-pixel brightness-change events at microsecond resolution. They don't output scene flow directly, but they sidestep one of the entire edifice's foundations — brightness constancy — because an event is a brightness change. Scene flow from event streams is an active research area and may look architecturally different from the frame-based pipeline above.

8. What We Build in NB 01

The companion notebook takes a pedagogical approach: no PyTorch, no pretrained models. Pure NumPy.

Honest disclosure up front. NB 01 hands two things to the model as ground truth: optical flow and per-point depth. Both are computed analytically from the synthetic scene parameters rather than estimated from pixels. That means the headline "flow-aware reconstruction beats static by 29×" result is real for this setup but flattered by those inputs — the only error source is the missing in the 2D→3D lift step. The didactic target is the lifting and propagation machinery (why errors compound frame-by-frame, why global optimization beats local chasing), and isolating that machinery from flow-estimation and depth-estimation errors is what makes the drift story legible. A planned v2 follow-up will retire both cheats using pyramidal Lucas–Kanade on rendered image intensities and depth-from-flow via epipolar geometry, and report what the honest multiple actually is.

You'll:

-

Generate synthetic video: Create simple 3D objects (spheres, cubes) and animate them with known motion (translation, rotation).

-

Project to 2D: Render each frame as a 2D image using basic projection. This simulates the observation model.

-

Estimate scene flow: Given two consecutive frames and depth, compute the 3D motion field using the brightness constancy constraint. (In v1 this uses analytical flow and analytical depth — see disclosure above.)

-

Reconstruct dynamic point clouds: Build a 4D point cloud (position over time) using the estimated flow.

-

Compare reconstructions: Show how static reconstruction (ignoring flow) produces ghosting and blur, while flow-aware reconstruction is sharper and more accurate.

Everything runs on CPU and fits in under 300 lines. The point is intuition: understand the math, see the plots, run the code. This grounds the theory in concrete computation.

9. Summary

- Optical flow captures 2D apparent motion in the image plane; it's powerful but missing the third dimension.

- Scene flow extends this to 3D: per-point 3D motion in the world.

- Depth + optical flow → scene flow via simple geometric relationships.

- Neural scene flow fields learn continuous 3D motion from monocular video using self-supervised losses.

- 4DGS deformation fields are learned scene flow in practice: Gaussians move according to an optimized motion field.

- The full pipeline: COLMAP (poses) → 3DGS (geometry) → per-frame deformations (motion) → 4D rendering.

- World models and video prediction are the next frontier: moving from "what happened?" to "what will happen?"

If you're building dynamic 3D applications—volumetric video, dynamic NeRF, 4DGS—you're fundamentally learning and applying scene flow, even if you don't call it that. Understanding the evolution from optical flow to neural fields helps you debug, optimize, and innovate on these systems.

Further Reading

Core Papers

- RAFT: Recurrent All-Pairs Field Transforms for Optical Flow (Teed & Deng, 2020) — State-of-the-art dense optical flow via recurrent refinement.

- Neural Scene Flow Fields for Dynamic Novel View Synthesis (Li et al., 2021) — The seminal work on continuous neural scene flow from monocular video.

- NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis (Mildenhall et al., 2020) — The foundation for all neural 3D representations; inspired dynamic variants.

- 3D Gaussian Splatting for Real-Time Radiance Field Rendering (Kerbl et al., 2023) — Fast, efficient 3D reconstruction via Gaussian surfel splats.

- 4DGS: 4D Gaussian Splatting for Real-Time Dynamic Scene Rendering (Wu et al., 2024) — Deformable Gaussians for dynamic scenes.

- Deformable 3D Gaussians for High-Fidelity Monocular Dynamic Scene Reconstruction (Yang et al., 2024) — Another approach to per-frame Gaussian deformations.

- D-NeRF: Neural Radiance Fields for Dynamic Scenes (Pumarola et al., 2021) — NeRF + time-dependent deformation fields.

- Nerfies: Deformable Neural Radiance Fields (Park et al., 2021) — Canonical space + learned deformation for dynamic reconstruction.

Related Artifocial Articles

- Gaussian Splatting Explained (W14 Basics) — Static 3D reconstruction; the foundation for 4DGS.

- The World Models Landscape 2026 (W13) — How learned dynamics and world models connect to scene understanding.

- Post-Transformer Architectures: SSMs and Beyond (W15 Trend) — Temporal modeling and state-space models for video and dynamics.

Bonus: NVIDIA Cosmos

NVIDIA Cosmos — A world model framework for video generation and prediction. Demonstrates the production-level convergence of scene understanding, flow, and generative modeling.

Next Steps

- Read this article to understand the conceptual progression.

- Run 01_video_to_3d_toy.ipynb to implement the math in NumPy.

- Explore 4DGS repos on GitHub and try them on your own videos. See how the deformation field matches the intuitions you've built here.

- Read Neural Scene Flow Fields for the full self-supervised training procedure.

The bridge from optical flow to 4D rendering is shorter than you think. And once you see it, the whole field clicks into place.

Stay connected:

- 📧 Subscribe to our newsletter for updates

- 📺 Watch our YouTube channel for AI news and tutorials

- 🐦 Follow us on Twitter for quick updates

- 🎥 Check us on Rumble for video content