NVIDIA’s $1 Trillion Gamble & OpenAI’s Pivot

Highlights of AI News for March 16-22, 2026

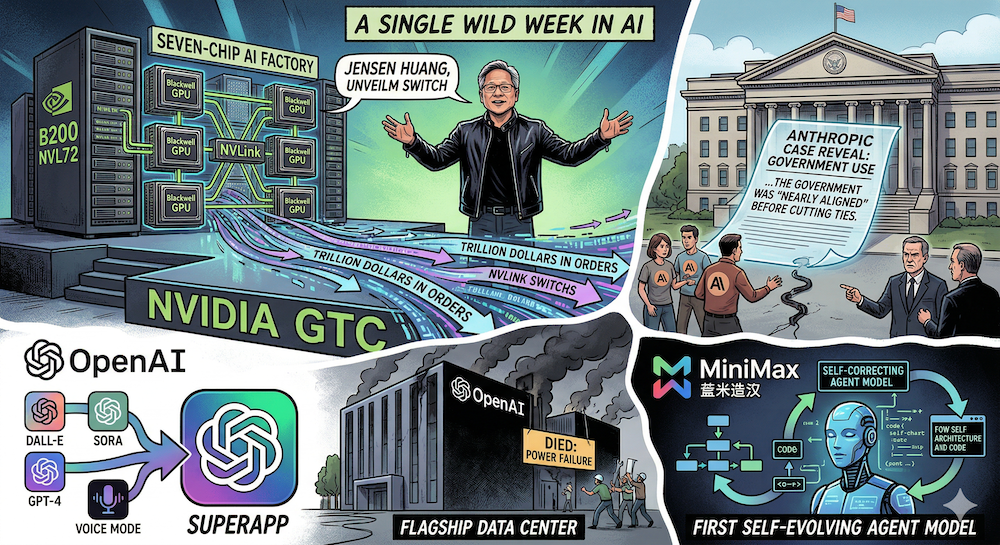

Week in Review | The week Jensen Huang unveiled a seven-chip AI factory and declared a trillion dollars in orders, Anthropic's Pentagon case revealed the government was "nearly aligned" before cutting ties, OpenAI merged everything into a superapp while its flagship data center died, and MiniMax shipped the first self-evolving agent model.

Watch our video on YouTube or Rumble

The Big Story: NVIDIA GTC 2026 — The Trillion-Dollar Keynote

Jensen Huang's two-hour GTC keynote on March 16 was the most consequential hardware announcement of the year — seven new chips, a new computing platform, and a claim that purchase orders through 2027 will reach $1 trillion.

Vera Rubin. The successor to Blackwell is in production now. Each Rubin GPU packs 336 billion transistors, 288GB of HBM4, and 22 TB/s memory bandwidth — delivering 50 petaFLOPS of inference per chip. The Vera Rubin NVL72 rack integrates 72 Rubin GPUs and 36 Vera CPUs into a single liquid-cooled unit: 3.6 exaflops of FP4 inference compute with 20.7 TB of HBM4. This is designed from the ground up for inference and agentic workloads, not training — a clear signal about where NVIDIA sees demand shifting.
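The rack-level figures follow directly from the per-chip numbers quoted above. Here is a quick arithmetic check, as a sketch: the function name `rack_totals` is ours, and it assumes decimal units (1,000 petaFLOPS per exaflop, 1,000 GB per TB) and that the 50 petaFLOPS per-chip figure is the FP4 inference number.

```python
def rack_totals(gpus: int, pflops_per_gpu: float, hbm_gb_per_gpu: float) -> tuple[float, float]:
    """Return (exaflops, HBM terabytes) for a rack, using decimal units."""
    exaflops = gpus * pflops_per_gpu / 1000  # petaFLOPS -> exaflops
    hbm_tb = gpus * hbm_gb_per_gpu / 1000    # GB -> TB
    return exaflops, hbm_tb


# Per-chip figures as quoted for the Rubin GPU; 72 GPUs per NVL72 rack.
exaflops, hbm_tb = rack_totals(gpus=72, pflops_per_gpu=50, hbm_gb_per_gpu=288)
print(f"{exaflops:.1f} exaflops, {hbm_tb:.1f} TB HBM4")  # 3.6 exaflops, 20.7 TB HBM4
```

Both rack totals match the announced 3.6 exaflops and 20.7 TB, so the rack numbers are simple sums of the per-chip specs rather than a separate claim.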

Groq 3 LPU. The first chip from NVIDIA's $20 billion Groq acquisition (completed December 2025). The Groq 3 Language Processing Unit carries 500 MB of on-die SRAM and 150 TB/s bandwidth, purpose-built for token generation. The Groq 3 LPX rack holds 256 LPUs and sits alongside the Vera Rubin system — NVIDIA now offers both GPU-based and LPU-based inference at rack scale. Expected to ship Q3.

NemoClaw. OpenClaw — the open-source agent framework created by Peter Steinberger that became the fastest-growing GitHub repo in history (250K+ stars, surpassing React and Linux) — got its enterprise moment at GTC. NVIDIA announced NemoClaw, essentially OpenClaw with enterprise-grade safety belts: the NVIDIA OpenShell runtime sandboxes every network request, file access, and inference call behind declarative policy, while Nemotron models provide local inference. NemoClaw runs on DGX Station, DGX Spark, or any dedicated platform — always-on autonomous agents with privacy and security guardrails baked in.

Open models. Nemotron 3 (Nano, Super, Ultra) — the most efficient open model family for agentic AI, with Nano delivering 4x throughput over Nemotron 2. Cosmos 2 for physical simulation, Groot 2 for humanoid robotics, and Alpamayo for autonomous vehicles rounded out the open-source releases.

Automotive partnerships. Uber will deploy NVIDIA Drive AV across 28 cities on four continents by 2028. Nissan, BYD, Geely, Isuzu, and Hyundai are building Level 4 autonomous vehicles on NVIDIA's Drive Hyperion program.

Why it matters for practitioners: The Vera Rubin + Groq 3 combination is NVIDIA's answer to the inference cost crisis. If you're running large-scale inference workloads, the LPU path offers a fundamentally different cost curve from GPU-based serving. NemoClaw is the bridge between OpenClaw's explosive community adoption and enterprise readiness: if the sandboxing and policy layer works as advertised, it removes the biggest blocker to deploying autonomous agents in production. That makes it directly relevant to the recursive self-improvement (RSI) systems stack we covered in our W12 trend tutorial.

Anthropic v. Pentagon: The "Nearly Aligned" Bombshell

The Anthropic-Pentagon saga, which we've covered since W10 and W11, took its most dramatic turn yet.

On March 20, Anthropic filed two sworn declarations ahead of the March 24 hearing, and the most striking detail was a timeline contradiction: on March 4 — the day after the Pentagon finalized its supply-chain-risk designation — Under Secretary Michael emailed Dario Amodei saying the two sides were "very close" on the exact issues (autonomous weapons, mass surveillance) that the government now cites as evidence Anthropic is a national security threat. The next day, Michael posted on X that there was "no active negotiation." A week later, he told CNBC there was "no chance" of renewed talks.

The amicus briefs kept piling up. Nearly 150 retired federal and state judges — appointed by both Republican and Democratic presidents — filed in support. Catholic ethicists filed separately, arguing Church teaching supports Anthropic's position on autonomous weapons. Tech industry groups with existing Pentagon contracts called for a pause on the designation. And the earlier filings from former national security officials and OpenAI/Google DeepMind employees remain on the record.

Why it matters for practitioners: The March 24 hearing before Judge Rita Lin in San Francisco is the inflection point. If the court grants temporary relief, it signals that the supply-chain-risk designation can't be weaponized for policy disagreements — a precedent every AI company with government business will benefit from. If not, the chilling effect on setting acceptable-use boundaries is real.

OpenAI Consolidates: Superapp, Astral, and a Headcount Blitz

OpenAI made three moves this week that together signal a strategic pivot toward consolidation.

The superapp. OpenAI plans to merge ChatGPT, Codex, and its Atlas browser into a single desktop application targeting coding and business productivity. The internal framing is blunt — OpenAI's applications chief described the current moment as a "wake-up call" and told employees they couldn't afford "side quests."

Astral acquisition. On March 19, OpenAI announced the acquisition of Astral, the team behind uv, Ruff, and ty — some of the most widely used open-source Python developer tools. The plan is to integrate Astral's tooling directly into Codex. For anyone in the Python ecosystem, this is significant: Ruff has become the default linter/formatter for many projects (including ours — we use it via format_all.sh).

Headcount doubling. The Financial Times reported OpenAI plans to grow from 4,500 to 8,000 employees by year-end, with hires concentrated in product, engineering, research, and sales.

The competitive pressure driving all three moves is quantifiable: Anthropic now captures 73% of first-time enterprise AI spending, according to Ramp data. Ten weeks ago, the split was 50/50. OpenAI still leads on total revenue ($25B vs. Anthropic's $19B annualized), but the trajectory has clearly shifted.

Why it matters for practitioners: The Astral acquisition directly affects the Python developer experience. If Ruff and uv become tightly integrated with Codex, the developer workflow could become significantly smoother — but also more vendor-locked. Watch whether the tools remain truly open-source post-acquisition (Astral says yes, but so did Promptfoo). The enterprise spending shift toward Anthropic is the more important signal — it suggests Claude's product quality advantage is translating into purchasing decisions.

Stargate's Flagship Data Center Is Dead

OpenAI's Stargate project lost its centerpiece this week. Oracle and OpenAI scrapped plans to expand the flagship Abilene, Texas data center campus — a facility that would have consumed over a gigawatt of power and cost an estimated $30 billion.

The two existing buildings launched last September, with six more due this year for a total of around 1.2 GW. The expansion to 2 GW is what died. The reasons: financing disagreements between OpenAI and Oracle, OpenAI's frequently shifting demand forecasts, and a multi-day winter outage caused by liquid cooling failures that damaged the relationship between OpenAI and operator Crusoe. OpenAI also reshuffled Stargate leadership as the broader financing picture deteriorated.

Meta is reportedly in talks to pick up the excess capacity from Crusoe, with NVIDIA helping broker the deal.

Why it matters for practitioners: The Stargate collapse is a reality check on AI infrastructure ambitions. In the same week NVIDIA claimed a $1 trillion order book at GTC, one of the highest-profile data center projects in history fell apart over basic execution challenges: financing, reliability, and shifting demand. For teams planning around cloud GPU availability, the takeaway is that announced capacity and delivered capacity are very different things. The irony of this also happening the same week OpenAI announced a superapp and headcount doubling is hard to miss.

Google Gemini 3.1 Pro: The Quiet Frontier Update

While GTC dominated headlines, Google shipped Gemini 3.1 Pro — and the numbers deserve attention.

The headline: a verified 77.1% on ARC-AGI-2, a benchmark that evaluates ability to solve entirely novel logic patterns. Google claims more than double the reasoning performance of Gemini 3 Pro. The upgraded Deep Think mode now achieves gold medal-level results on the written sections of the 2025 International Physics and Chemistry Olympiads.

Gemini 3.1 Pro is available via AI Studio, Vertex AI, Gemini CLI, and Android Studio, with higher limits for Google AI Pro and Ultra subscribers.

In parallel, Google is moving Gemini into consumer and government products: natural-language spreadsheet creation in Sheets (70.5% success on SpreadsheetBench), conversational queries in Maps ("Ask Maps"), and, perhaps most consequentially, Gemini agents for the Pentagon's 3-million-person unclassified workforce.

Why it matters for practitioners: ARC-AGI-2 at 77.1% is a significant milestone — this benchmark specifically targets novel reasoning that can't be solved by pattern-matching on training data. If you're evaluating models for tasks requiring genuine generalization (not just knowledge retrieval), Gemini 3.1 Pro is worth benchmarking. The Pentagon deal for unclassified work also positions Google as the beneficiary of the Anthropic supply-chain dispute — while Anthropic and OpenAI fight over defense contracts, Google quietly walks in.

Perplexity Goes Enterprise: The AI Desktop Agent

Perplexity launched Computer for Enterprise at its Ask 2026 developer conference, and the positioning is aggressive — directly targeting Microsoft Copilot and Salesforce.

The product orchestrates 20 AI models across 400+ enterprise app integrations (Gmail, Outlook, GitHub, Linear, Slack, Notion, Snowflake, Databricks, Salesforce). Employees query @computer directly in Slack channels, with conversations continuing in Perplexity's web or mobile interface. The company claims that in internal testing of 16,000+ queries, the system completed an estimated 3.25 years of work in four weeks.
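The "3.25 years of work in four weeks" claim is easier to judge once it is converted into a speedup and a per-query figure. The sketch below is our own back-of-the-envelope reading, not Perplexity's methodology. It assumes a 2,080-hour working year (52 weeks at 40 hours) and treats "16,000+" as exactly 16,000, which makes the per-query number an upper bound.

```python
WEEKS_PER_YEAR = 52
WORK_HOURS_PER_YEAR = 2080  # assumption: 52 weeks x 40 hours


def speedup(work_years: float, elapsed_weeks: float) -> float:
    """Weeks of claimed work delivered per week of elapsed time."""
    return work_years * WEEKS_PER_YEAR / elapsed_weeks


def minutes_per_query(work_years: float, queries: int) -> float:
    """Average human work, in minutes, attributed to each query."""
    return work_years * WORK_HOURS_PER_YEAR * 60 / queries


print(f"{speedup(3.25, 4):.2f}x")                    # 42.25x
print(f"{minutes_per_query(3.25, 16_000):.2f} min")  # 25.35 min
```

Under these assumptions, the claim amounts to crediting each query with roughly 25 minutes of saved human work, which is the number worth questioning when evaluating the pitch.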

Security features include SOC 2 Type II, SAML SSO, audit logging, and sandboxed query environments. Over 100 enterprise customers reportedly demanded access over a single weekend.

Why it matters for practitioners: The multi-model orchestration approach is interesting — rather than betting on a single frontier model, Perplexity routes queries to the best available model for each task. If the 20-model orchestration actually works at enterprise scale (big if), it sidesteps the single-vendor lock-in that the OpenAI superapp strategy implies. The Slack-native interface also lowers the adoption barrier compared to standalone apps.
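To make the routing idea concrete, here is a minimal sketch of per-task model selection. It is an illustration of the general technique only, not Perplexity's implementation: the `ModelEntry` type, the `route` function, the model names, and the scores are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ModelEntry:
    name: str
    scores: dict[str, float] = field(default_factory=dict)  # task type -> quality score
    available: bool = True


def route(task_type: str, registry: list[ModelEntry]) -> str:
    """Pick the highest-scoring available model that supports the task type."""
    candidates = [m for m in registry if m.available and task_type in m.scores]
    if not candidates:
        raise LookupError(f"no available model for task type {task_type!r}")
    return max(candidates, key=lambda m: m.scores[task_type]).name


# Hypothetical registry: names and scores are placeholders.
registry = [
    ModelEntry("model-a", {"search": 0.9, "code": 0.6}),
    ModelEntry("model-b", {"code": 0.8, "summarize": 0.7}),
    ModelEntry("model-c", {"code": 0.95}, available=False),
]
print(route("code", registry))  # model-b (model-c scores higher but is unavailable)
```

The fallback behavior is the point: when the top-scoring model is down, the router degrades to the next best one instead of failing, which is the property a single-vendor setup cannot offer.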

MiniMax M2.7: The First Self-Evolving Agent Model

MiniMax launched M2.7 on March 18 — and the headline feature connects directly to the GTC story above: this is the first production model that deeply participated in its own evolution.

The self-evolution pipeline has three core modules: short-term memory, self-feedback, and self-optimization. During training, M2.7 autonomously ran over 100 rounds of scaffold optimization on the OpenClaw framework (the same open-source agent framework NVIDIA's NemoClaw builds on), achieving a 30% performance improvement on internal evaluations without human intervention. MiniMax claims the model can perform 30–50% of the reinforcement learning research workflow autonomously.
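MiniMax has not published the pipeline's internals, so the following is only a toy illustration of how the three named pieces could fit together in a propose-evaluate-keep loop. Everything in it is an assumption made for the example: the scaffold is reduced to a few numeric "knobs" with made-up names, `evaluate` is a stand-in scoring function, and the loop is plain hill climbing rather than anything M2.7 is known to do.

```python
import random
from collections import deque


def evaluate(scaffold: dict[str, float]) -> float:
    """Hypothetical eval: score is highest (1.0) when every knob equals 0.7."""
    return 1.0 - sum((v - 0.7) ** 2 for v in scaffold.values()) / len(scaffold)


def self_optimize(scaffold: dict[str, float], rounds: int = 100, seed: int = 0):
    rng = random.Random(seed)
    memory = deque(maxlen=5)  # short-term memory: (knob, accepted) for recent rounds
    best, best_score = dict(scaffold), evaluate(scaffold)
    for _ in range(rounds):
        # Use memory to steer away from knobs whose recent edits were rejected.
        rejected = {knob for knob, accepted in memory if not accepted}
        knobs = [k for k in sorted(best) if k not in rejected] or sorted(best)
        knob = rng.choice(knobs)
        # Self-optimization: propose a small change to one knob, clamped to [0, 1].
        candidate = dict(best)
        candidate[knob] = min(1.0, max(0.0, best[knob] + rng.uniform(-0.1, 0.1)))
        # Self-feedback: score the candidate and keep it only if it improves.
        score = evaluate(candidate)
        accepted = score > best_score
        memory.append((knob, accepted))
        if accepted:
            best, best_score = candidate, score
    return best, best_score, list(memory)


# Hypothetical starting scaffold; knob names are placeholders.
start = {"retry_budget": 0.2, "context_share": 0.9, "tool_timeout": 0.5}
best, score, recent = self_optimize(start)
print(f"score: {evaluate(start):.3f} -> {score:.3f}")
```

Because a candidate is kept only when it scores higher, the final score can never fall below the starting one. The open question for a real system is whether the evaluation it optimizes against reflects real-world performance.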

The benchmarks are competitive: 56.22% on SWE-Pro (matching GPT-5.3-Codex), 57.0% on Terminal Bench 2, a 97% skill compliance rate across 40 complex agent skills in MM Claw testing, and an 88% win rate against M2.5 in head-to-head evaluation. MiniMax also shipped MaxClaw, an always-on managed agent built on OpenClaw and powered by M2.7.

Why it matters for practitioners: M2.7 is the most concrete real-world implementation of the recursive self-improvement loop we've been covering all week in our W12 tutorial series. The connection to OpenClaw means M2.7 agents can plug into the same ecosystem NVIDIA is building around NemoClaw: enterprise security, sandboxed execution, and multi-model orchestration. The self-evolution claim is worth watching closely: if 100 rounds of autonomous scaffold optimization genuinely work, that validates the ICLR 2026 RSI Workshop's thesis that self-improving AI systems are moving from research to production.

By the Numbers

- $1 trillion — NVIDIA's claimed purchase orders for Blackwell and Vera Rubin through 2027. The largest demand signal in semiconductor history.

- 336 billion — Transistors per Rubin GPU. 50 petaFLOPS inference per chip.

- $30 billion+ — Estimated cost of the cancelled Stargate Abilene expansion. The most expensive AI infrastructure project to die this year.

- 73% — Anthropic's share of first-time enterprise AI spending, up from 50% just 10 weeks ago.

- 77.1% — Gemini 3.1 Pro's verified score on ARC-AGI-2, a benchmark for novel reasoning.

- 100 rounds — Autonomous scaffold optimization cycles M2.7 ran during its own training, without human intervention.

- 4,500 → 8,000 — OpenAI's planned headcount growth by end of 2026.

- Nearly 150 — Retired federal and state judges who filed amicus briefs supporting Anthropic.

What to Watch Next Week

- Anthropic v. Pentagon hearing (March 24) — Judge Rita Lin in San Francisco. The temporary relief ruling could reshape government AI procurement for years.

- OpenClaw ecosystem momentum — With NVIDIA, MiniMax, and multiple partners building on OpenClaw in a single week, the next question is developer adoption. Early integrations and community feedback will signal whether this is the real agent framework standard or another consortium announcement.

- OpenAI superapp timeline — The merger of ChatGPT, Codex, and Atlas is announced but not shipped. Any developer preview or beta access will be closely watched — especially given the Stargate setback.

- M2.7 independent evaluation — MiniMax's self-evolution claims are striking but self-reported. Community reproduction of the SWE-Pro and agent skill results will determine if the recursive self-improvement loop actually works at production scale.

- DeepSeek V4 — Still missing in action. Every predicted window has passed. At this point, the absence is itself a story.
