Anthropic's Huge Model Leaked, Sora Shutdown

Highlights of AI News for March 22–29, 2026

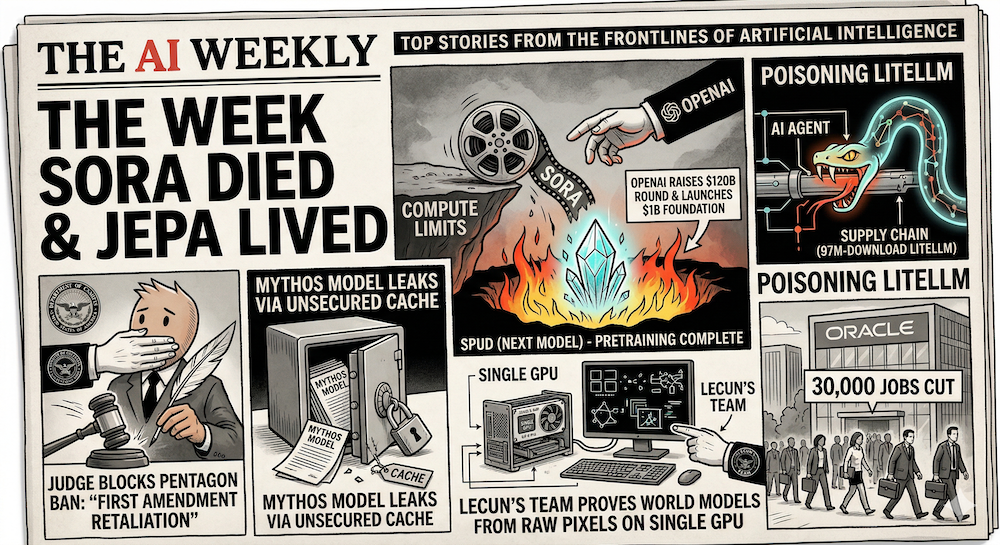

Week in Review | The week Sora died and JEPA lived: OpenAI killed Sora to free compute for "Spud" (its next model, pretraining complete), raised its total round to $120B, and launched a foundation with a $1B first-year commitment. A judge blocked the Pentagon's Anthropic ban as "First Amendment retaliation." Anthropic's Mythos model leaked via an unsecured cache. A supply chain attack that began with an AI agent poisoned litellm, a package with 97M monthly downloads. Oracle prepared to cut up to 30,000 jobs, and LeCun's team showed that world models can be trained from raw pixels on a single GPU.

Watch our video on YouTube or Rumble

The Big Story: Sora Is Dead — and the World Model Landscape Just Shifted

OpenAI shut down Sora on Tuesday, March 24, discontinuing its AI video generation platform entirely and unwinding a $1 billion partnership with Disney that had been announced just three months earlier.

The reason was economic, not technical. Running the video model consumed so much compute that it starved OpenAI's other teams — particularly the coding tools and enterprise products that generate actual revenue. With an IPO potentially coming in late 2026 and annualized revenue now above $25 billion, the calculus was clear: pixel-level video generation is a compute sinkhole, and coding tools are the money machine.

Disney's response was notably diplomatic, but the $1B investment deal is dead — no money ever changed hands.

Why it matters for the world models debate: The same day OpenAI killed Sora, the wider AI research community was digesting LeWorldModel — released the day before by a team including Yann LeCun — which trains a world model from raw pixels on a single GPU in a few hours. The contrast could not be sharper: generative video simulation proved unsustainably expensive while energy-based prediction proved remarkably cheap. For our W13 world models coverage, this is the defining event of the week.

LeWorldModel: JEPA Finally Works from Raw Pixels

On March 23, Lucas Maes, Quentin Le Lidec, Damien Scieur, Yann LeCun, and Randall Balestriero (Mila, NYU, Samsung SAIL, Brown) released LeWorldModel — the first Joint Embedding Predictive Architecture that trains stably end-to-end from raw pixels.

The technical significance: previous JEPA world models either relied on pretrained features (DINO-WM), required complex multi-term losses with 6+ hyperparameters, or used fragile tricks like exponential moving averages to prevent representation collapse. LeWM eliminates all of this with just two loss terms: a standard prediction loss and a regularizer called SIGReg that keeps the latent space well-behaved.
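To make the two-term objective concrete, here is a minimal pure-Python sketch. It assumes a weighting coefficient `lam` and uses a simple moment-matching penalty (`moment_regularizer`) as a stand-in for SIGReg. It is not the authors' regularizer or code, and every function name here is hypothetical.

```python
from statistics import fmean


def prediction_loss(predicted, target):
    """Mean squared error between predicted and target latent vectors."""
    errors = [
        (p - t) ** 2
        for pred_vec, tgt_vec in zip(predicted, target)
        for p, t in zip(pred_vec, tgt_vec)
    ]
    return fmean(errors)


def moment_regularizer(latents):
    """Stand-in for SIGReg (NOT the real regularizer): penalize each latent
    dimension for drifting away from zero mean and unit variance."""
    dims = list(zip(*latents))  # transpose: one tuple per latent dimension
    penalty = 0.0
    for values in dims:
        mean = fmean(values)
        var = fmean([(v - mean) ** 2 for v in values])
        penalty += mean ** 2 + (var - 1.0) ** 2
    return penalty / len(dims)


def total_loss(predicted, target, lam=0.1):
    """Two-term objective: prediction error plus a weighted regularizer.
    `lam` is an assumed weighting hyperparameter."""
    return prediction_loss(predicted, target) + lam * moment_regularizer(predicted)
```

A collapsed representation (every latent identical) has zero variance, so the regularizer term stays large even when the prediction error is zero. That is the failure mode a term of this kind is meant to discourage.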

The numbers: ~15M parameters, single GPU, a few hours of training, <1 second planning time, up to 48× faster than foundation-model-based alternatives. The code is open source.

This is directly relevant to AMI Labs — LeCun's $1.03B startup — which is building commercial world models on JEPA. LeWM provides the proof that the architecture works from raw sensory input without crutches, exactly the engineering foundation AMI needs.

For our full technical analysis, see our W13 trend tutorial.

OpenAI's Week: Kill Sora, Train Spud, Raise $120B, Launch a Foundation

Sora's shutdown wasn't an isolated event — it was part of a deliberate resource reallocation. OpenAI's next model, internally codenamed "Spud," finished pretraining around March 25. Sam Altman told staff the company expects a "very strong model" in a few weeks that can "really accelerate the economy," adding that "things are moving faster than many of us expected." Whether Spud becomes GPT-5.5 or GPT-6 remains unclear. The product organization has been renamed "AGI Deployment."

On the funding side, OpenAI CFO Sarah Friar confirmed an additional $10B tranche on March 24, bringing the total round to north of $120B at the same $730B pre-money valuation from February. Investors in the new tranche include MGX, Coatue, and Thrive Capital.

And on March 25, Altman announced the OpenAI Foundation with a $1B first-year commitment (part of a $25B long-term pledge) targeting disease research (Alzheimer's, high-mortality conditions), bio-risk mitigation, and economic disruption from AI. Altman's message acknowledged that "AI will also present new threats to society" — a notable concession from a CEO who has generally emphasized the upside.

Why it matters: The Sora-to-Spud pivot is the clearest signal yet of OpenAI's priorities: frontier model capability over creative tools. The Foundation launch, coming the same week as Oracle's massive AI-driven layoffs, looks like preemptive damage control for the economic disruption those cuts represent.

Anthropic's Mythos Model Leaked via Unsecured Cache

On March 26–27, internal Anthropic materials surfaced from a misconfigured, publicly searchable data cache — including draft announcements and nearly 3,000 unpublished assets revealing a new model called Claude Mythos (internal tier name: Capybara).

Anthropic confirmed the model exists and described it as a "step change" — a new tier above Opus, with dramatically higher scores on coding, academic reasoning, and cybersecurity benchmarks. Leaked internal documents warn the model could significantly heighten cybersecurity risks by rapidly finding and exploiting software vulnerabilities.

Why it matters: Two things are notable. First, the capability jump: a new tier above Opus suggests Anthropic has been holding back a major release, possibly for safety evaluation. Second, the leak itself — an AI safety company's internal materials exposed via a misconfigured CMS is an embarrassing operational security failure, however minor the actual damage.

Anthropic v. Pentagon: Judge Blocks Ban as "First Amendment Retaliation"

The first hearing in Anthropic's lawsuit against the Department of Defense took place Monday in San Francisco federal court. U.S. District Judge Rita Lin was notably skeptical of the government's position, describing the supply-chain-risk designation as looking like an attempt to punish the company rather than address a legitimate security concern.

On Thursday, Judge Lin delivered: a 43-page ruling granting Anthropic's preliminary injunction, blocking the Pentagon from enforcing the supply-chain-risk designation. The language was stinging. CNN reported that Lin wrote the government's actions constituted "classic illegal First Amendment retaliation," adding that nothing in the governing statute supports branding an American company a potential adversary for expressing disagreement with the government.

The backstory: Anthropic signed a $200M contract with the Pentagon in July 2025 but refused to grant unfettered access to Claude across all purposes, specifically pushing back on fully autonomous weapons and domestic mass surveillance. The Pentagon responded by designating Anthropic a supply-chain risk, effectively blacklisting it from all defense contractor business. The Pentagon CTO has signaled that the fight isn't over, but the injunction stands while the case proceeds.

Why it matters for practitioners: The ruling signals that courts may not let the government weaponize procurement designations against AI companies that impose use-case restrictions on their models. A district-court preliminary injunction is not binding precedent, but every AI company negotiating government contracts now has a ruling to cite.

Oracle Prepares to Cut 30,000 Jobs for AI Infrastructure

Oracle is reportedly planning to cut 20,000–30,000 positions — up to 18% of its 162,000 global workforce — to redirect $8–10 billion in cash flow toward AI data center expansion. The cuts follow an estimated 10,000 layoffs in late 2025 as part of a $1.6 billion restructuring. Several U.S. banks have reportedly scaled back financing for Oracle's data center build-out, raising questions about the company's debt capacity.

Coming weeks after Block cut 40% of its workforce with Jack Dorsey explicitly citing AI, the Oracle cuts reinforce the same pattern: companies are simultaneously investing billions in AI infrastructure and cutting the human workforce that AI is meant to replace.

Google's Gemini Keeps Expanding

Google continued its aggressive push across the Gemini ecosystem this week.

Gemini 3.1 Flash-Lite (launched March 3) reached broader availability, delivering 2.5× faster time-to-first-token and 45% faster output than earlier Gemini versions at just $0.25 per million input tokens. The Elo score of 1432 on Arena.ai puts it above previous-generation Gemini models at a fraction of the cost.

Gemini across Workspace: Google announced sweeping AI integration across Docs, Sheets, Slides, and Drive — Gemini can now synthesize information from emails, files, chats, and calendar to auto-generate fully formatted documents.

Chat history import: On March 26, Google launched a migration tool that lets users import their full chat history and memories from ChatGPT, Claude, and other AI apps directly into Gemini — either via a ZIP file upload or a memory-summary prompt. Bloomberg called it Google's most aggressive move yet to poach users from OpenAI. It's a deliberate play to lower switching costs at the exact moment OpenAI is distracted by Sora's shutdown and Spud's preparation.

Why it matters for practitioners: Flash-Lite at $0.25/M input tokens undercuts nearly everything else on the market for high-volume workloads. The chat history import tool signals that the AI assistant market is entering a platform war phase where user data portability becomes a competitive weapon.
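As a rough illustration of what that price means for a high-volume workload, the sketch below computes input-side cost only. The request volume and tokens-per-request figures are hypothetical, and output-token pricing, which is not quoted here, is left out.

```python
PRICE_PER_MILLION_INPUT_TOKENS_USD = 0.25  # figure quoted above


def input_cost_usd(input_tokens, price_per_million=PRICE_PER_MILLION_INPUT_TOKENS_USD):
    """Input-side cost only; output-token pricing is not covered here."""
    return input_tokens / 1_000_000 * price_per_million


# Hypothetical workload: 50,000 requests per day at 2,000 input tokens each.
daily_tokens = 50_000 * 2_000
daily_cost = input_cost_usd(daily_tokens)
print(f"{daily_tokens:,} input tokens/day costs ${daily_cost:.2f}")
```

Under those assumed numbers the workload consumes 100 million input tokens a day, which comes to $25 of input cost.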

World Models Research: The Broader Momentum

LeWorldModel didn't arrive in a vacuum. Two recent papers reinforce the same shift toward decoder-free, latent-space architectures — and together with LeWM, they paint a clear picture of where the field is heading.

R2-Dreamer (arXiv:2603.18202, March 18) proposes a decoder-free model-based RL framework using a Barlow Twins-inspired redundancy reduction objective. It matches DreamerV3 and TD-MPC2 on DeepMind Control Suite and Meta-World while training 1.59× faster than DreamerV3. The convergence with LeWM is notable — both independently conclude that world models don't need decoders.

Causal-JEPA (arXiv:2602.11389, February 2026), which we covered in our W13 trend tutorial and landscape tutorial, extends the JEPA framework to object-centric representations — achieving ~20% improvement in counterfactual reasoning and planning with just 1% of the latent features required by patch-based world models.

The trend is unmistakable: the world models community is moving away from pixel reconstruction and toward compact, regularized latent spaces. Sora's death and LeWM's birth are the bookends of this shift.

For the full landscape analysis, see our W13 intermediate tutorial.

LiteLLM Supply Chain Attack: When pip install Steals Your Keys

On Tuesday, March 24, a threat actor known as TeamPCP published poisoned versions of litellm (versions 1.82.7 and 1.82.8) to PyPI. For approximately three hours, anyone who ran pip install litellm or upgraded the package got a credential stealer that harvested SSH keys, AWS/GCP/Azure credentials, Kubernetes configs, git credentials, environment variables, shell history, crypto wallets, SSL private keys, CI/CD secrets, and database passwords. The exfiltrated data was sent as an encrypted archive to a command-and-control domain disguised as models.litellm.cloud.
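A quick way to check an environment against the two version numbers named above is sketched below, using the standard library's `importlib.metadata`. The helper names are hypothetical. A clean result only describes what is installed now; it does not show that a poisoned release was never installed earlier.

```python
from importlib import metadata

# Versions named above as poisoned.
COMPROMISED_VERSIONS = {"1.82.7", "1.82.8"}


def is_compromised(version):
    """True if the given litellm version string is one of the poisoned releases."""
    return version.strip() in COMPROMISED_VERSIONS


def check_installed_litellm():
    """Return a short status string for the litellm in the current environment."""
    try:
        installed = metadata.version("litellm")
    except metadata.PackageNotFoundError:
        return "litellm is not installed"
    if is_compromised(installed):
        return f"litellm {installed} is a poisoned release: rotate credentials"
    return f"litellm {installed} is not one of the listed poisoned releases"


if __name__ == "__main__":
    print(check_installed_litellm())
```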

LiteLLM has 97 million downloads per month and sits in the dependency tree of much of the AI ecosystem: DSPy, CrewAI, OpenHands, Microsoft GraphRAG, Google ADK, and many MCP servers all depend on it transitively. The viral AI agent OpenClaw, which routes through LiteLLM Proxy, was directly impacted: any OpenClaw instance that upgraded during the attack window had its entire environment compromised.

How it happened: The attack chain started weeks earlier. On February 27, an autonomous AI agent called hackerbot-claw — described as being powered by Claude Opus 4.5 — exploited a misconfigured pull_request_target workflow in Aqua Security's Trivy repository (the most widely used open-source vulnerability scanner, with 32K stars and 100M+ annual downloads). The bot stole a Personal Access Token, deleted all 178 GitHub releases, and pushed a malicious VSCode extension. From the compromised Trivy CI/CD pipeline, TeamPCP eventually obtained litellm's PyPI publishing token.
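The write-up above does not say exactly how the Trivy workflow was misconfigured. One commonly cited risky pattern is a `pull_request_target` trigger combined with a checkout of the pull request's head commit, which runs untrusted code in a context that can reach repository secrets. The heuristic below flags that combination. It is an illustrative sketch, the example workflow is hypothetical, and a text match is no substitute for a real review.

```python
import re


def flag_risky_workflow(workflow_text):
    """Heuristic: a workflow triggered by pull_request_target that also checks
    out the pull request's head code may run untrusted code with repo secrets."""
    uses_target = re.search(r"\bpull_request_target\b", workflow_text) is not None
    checks_out_pr_head = re.search(
        r"github\.event\.pull_request\.head\.(sha|ref)", workflow_text
    ) is not None
    return uses_target and checks_out_pr_head


# Hypothetical workflow for illustration; not Trivy's actual configuration.
EXAMPLE = """
on: pull_request_target
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          ref: ${{ github.event.pull_request.head.sha }}
      - run: make test
"""

print(flag_risky_workflow(EXAMPLE))
```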

How it was discovered, thanks to a bug in the malware: The poisoned version used a .pth file, which Python processes on every interpreter startup. The payload launched a child Python process, and because that child was itself a new interpreter, it re-triggered the same .pth file, which launched another child, creating an exponential fork bomb. Callum McMahon was using an MCP plugin inside Cursor that pulled litellm as a transitive dependency; his machine ran out of RAM and crashed. Without that accidental fork bomb, the attack could have gone undetected for days or weeks.
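The mechanism is easy to inspect locally. Python's `site` module executes any line in a .pth file that begins with `import`, so a short scan of a site-packages directory shows which files carry startup code. The sketch below is a minimal audit helper with a hypothetical function name. Some legitimate packages also ship such lines, so a hit is a prompt for review, not proof of compromise.

```python
import sysconfig
from pathlib import Path


def executable_pth_lines(directory):
    """Return (filename, line) pairs for .pth lines that Python's site module
    would execute at startup, i.e. lines beginning with 'import'."""
    findings = []
    for pth_file in sorted(Path(directory).glob("*.pth")):
        for line in pth_file.read_text(errors="replace").splitlines():
            if line.startswith(("import ", "import\t")):
                findings.append((pth_file.name, line))
    return findings


if __name__ == "__main__":
    # Scan the current environment's pure-Python site-packages directory.
    site_packages = sysconfig.get_paths()["purelib"]
    for filename, line in executable_pth_lines(site_packages):
        print(f"{filename}: {line[:80]}")
```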

Why this matters for practitioners: As Andrej Karpathy wrote: "Every time you install any dependency you could be pulling in a poisoned package anywhere deep inside its entire dependency tree." He argues that classical software engineering's assumption that dependencies are good needs re-evaluation, and says he prefers to use LLMs to "yoink" functionality when possible.

This attack is especially relevant in the age of AI agents and vibe coding, where developers routinely pip install packages suggested by AI assistants without auditing the dependency tree. The irony is thick: an AI agent (hackerbot-claw) compromised the security scanner (Trivy) that was supposed to protect the AI toolkit (litellm) that AI agents (OpenClaw) depend on.

For our W13 notebooks, this validates our deliberate choice of pure NumPy with zero AI framework dependencies. Our entire world model (encoder, predictor, SIGReg regularizer, Adam optimizer, CEM planner) depends directly on exactly two packages: numpy and matplotlib. Both are maintained by massive, well-audited communities with decades of trust. No litellm, no transitive chains of AI wrappers, no hidden .pth files. When your direct dependencies number two, supply chain attacks have far fewer places to hide.
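For readers who want to see what a given package itself declares as requirements, the standard library can list them without any third-party tooling. The helper below is a hypothetical sketch built on `importlib.metadata.requires`. It reports only the declared requirements of one installed distribution and does not walk the full tree.

```python
from importlib import metadata


def direct_requirements(distribution_name):
    """Return the declared requirement strings of an installed distribution,
    or an empty list if it is not installed or declares none."""
    try:
        requirements = metadata.requires(distribution_name)
    except metadata.PackageNotFoundError:
        return []
    return list(requirements or [])


if __name__ == "__main__":
    for name in ("numpy", "matplotlib"):
        print(name, direct_requirements(name))
```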

AI Scholar Warns of Doomsday Scenario

In a widely shared Foreign Policy essay, an AI scholar argued for assigning high probability to catastrophic AI outcomes, citing the convergence of autonomous military systems and geopolitical instability. The piece prompted debate across the AI safety community about whether current governance frameworks are adequate for the pace of capability advances.

By the Numbers

- $120 billion — OpenAI's total fundraising round after the additional $10B tranche, at $730B pre-money valuation.

- $1 billion — OpenAI Foundation's first-year commitment for disease research, bio-risk, and AI disruption mitigation.

- $1 billion — Disney deal with OpenAI that collapsed when Sora was killed. No money changed hands.

- 43 pages — Judge Lin's ruling blocking the Pentagon's Anthropic ban as First Amendment retaliation.

- ~3,000 — Unpublished Anthropic assets exposed in the Claude Mythos data leak.

- 15 million — Parameters in LeWorldModel, which trains from raw pixels on one GPU in hours.

- 48× — LeWM's planning speed advantage over foundation-model-based world models.

- 20,000–30,000 — Oracle jobs at risk as the company redirects $8–10B to AI infrastructure.

- $0.25 — Google Gemini 3.1 Flash-Lite's price per million input tokens.

- 97 million — Monthly PyPI downloads for litellm, the package poisoned in a supply chain attack on March 24.

- ~3 hours — How long the malicious litellm versions were live on PyPI before being quarantined.

What to Watch Next Week

- OpenAI "Spud" release timeline — Altman said "a few weeks." If Spud launches in early April, it will be the first major model release since the Sora shutdown freed compute. The capability jump and pricing will signal OpenAI's post-Sora strategic direction.

- Claude Mythos official launch — Anthropic confirmed the model exists and is in early-access testing. An official release could come any day. The leaked cybersecurity benchmarks suggest this will be a significant capability jump above Opus 4.6.

- Anthropic v. Pentagon next steps — The preliminary injunction is in place, but the Pentagon CTO has signaled defiance. Watch for the government's appeal or compliance timeline — and whether other AI companies cite the ruling in their own contract negotiations.

- Post-Sora creative landscape — Veo 3.1, LTX 2.3, and Helios become the de facto video generation options. Watch for talent movement from OpenAI's video team and whether any of these projects announce new funding.

- LiteLLM fallout — With a package that sees 97M monthly downloads compromised for ~3 hours, expect ongoing reports of credential theft, incident response disclosures, and a broader conversation about PyPI supply chain security in AI agent frameworks.

- Oracle layoff timeline — Whether the cuts of up to 30,000 jobs materialize, and how quickly, will signal how aggressively enterprises are reallocating human capital to AI infrastructure.

- Our W14 preview: Next week we dive deep into LeCun's full JEPA publication arc — from the 2022 position paper through I-JEPA, V-JEPA, V-JEPA 2, VL-JEPA, and now LeWM — tracing the intellectual journey that led to AMI Labs and what LeWM's stable training tells us about their technical roadmap.

All References

- OpenAI Discontinues Sora — Variety (March 24, 2026)

- Why OpenAI Really Shut Down Sora — TechCrunch (March 29, 2026)

- Disney Exits OpenAI Deal — Deadline (March 24, 2026)

- OpenAI "Spud" Model Finished Pretraining — The Decoder (March 2026)

- OpenAI Raises Additional $10B, Total Round to $120B — CNBC (March 24, 2026)

- OpenAI Foundation Pledges $1B — Fortune (March 25, 2026)

- Anthropic Mythos Model Leak — Fortune (March 26, 2026)

- Anthropic Mythos Cybersecurity Risks — Fortune (March 27, 2026)

- Anthropic Confirms Mythos, Plans Launch — SiliconAngle (March 27, 2026)

- LeWorldModel: Stable End-to-End JEPA from Pixels — Maes et al. (March 23, 2026)

- LeWM GitHub — Official code

- Anthropic Wins Preliminary Injunction — CNBC (March 26, 2026)

- Judge Blocks Pentagon's Anthropic Ban — CNN (March 26, 2026)

- Pentagon CTO Says Ban Still Stands — Breaking Defense (March 2026)

- Anthropic v. Pentagon Hearing — NPR (March 24, 2026)

- Oracle Layoffs — Bloomberg

- Gemini 3.1 Flash-Lite — Google Blog

- Gemini Workspace Integration — Use Apify

- Gemini Chat History Import Tool — Google Blog (March 26, 2026)

- R2-Dreamer — Morihira et al. (March 18, 2026)

- Causal-JEPA — Nam et al. (February 2026)

- AI Doomsday Essay — Foreign Policy (March 24, 2026)

- AMI Labs TechCrunch coverage

- World Labs / Marble

- Snyk: How a Poisoned Security Scanner Became the Key to Backdooring LiteLLM — Snyk (March 2026)

- Wiz: TeamPCP Trojanizes LiteLLM — Wiz Blog (March 2026)

- The Hacker News: TeamPCP Backdoors LiteLLM — The Hacker News (March 2026)

- FutureSearch: Supply Chain Attack in litellm 1.82.8 — FutureSearch (March 2026)

- Andrej Karpathy on litellm supply chain attack — X (March 2026)

- CrowdStrike: What Security Teams Need to Know About OpenClaw — CrowdStrike Blog

- LiteLLM Official Security Update — LiteLLM Docs (March 2026)
